Deep Learning Explained

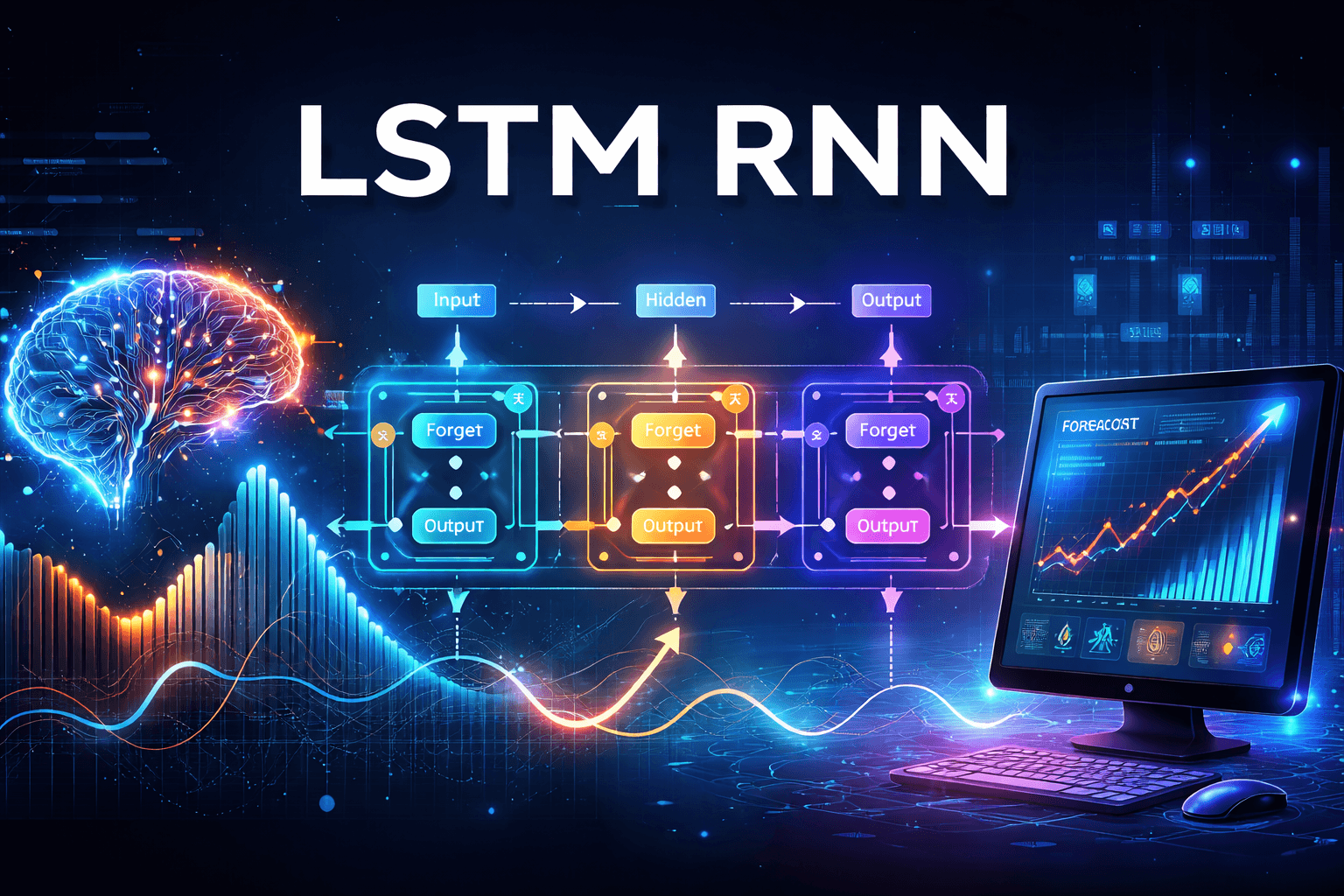

Popular neural network architectures use many types of layers, each serving a distinct purpose. In this post, we look at one of the most fundamental: the linear layer. In a linear layer, every neuron (also known as a perceptron) in the preceding layer is connected to every neuron in the following layer. This is why it is commonly called a fully connected layer.

Forward Propagation

To better understand this, consider that if the preceding layer consists of \(m\) neurons and the following layer has \(n\) neurons, the network establishes a total of \(mn\) individual connections. Each connection carries its own weight, which determines how strongly a signal passes from one neuron to the next as information flows through the network.

The weight associated with the connection between the kth neuron in the previous layer \(\textbf{l-1}\) and the jth neuron in the current layer \(\textbf{l}\) is denoted as \(\mathbf{w_{jk}^l}\). This weight represents the strength of the influence that the kth neuron has on the activation of the jth neuron, playing a crucial role in the computations performed by the neural network.

Single perceptron at layer l

Neurons are depicted as circles; the jth neuron in layer l is shown as a red circle.

A parameterized function is a mathematical construct that processes an input to yield a specific decision or estimate. One of the most basic forms is the weighted (w) sum of the inputs plus a bias term (b). The weights assign a different level of importance to each input before the inputs are combined. Nonlinearity is introduced by passing this sum through an activation function such as the sigmoid. The weights w0, w1 and the bias b are the parameters of the function.

Let \(\mathbf{a_0^{l-1}}\), \(\mathbf{a_1^{l-1}}\), …, \(\mathbf{a_m^{l-1}}\) be the outputs of the m neurons in layer l-1, and let \(\mathbf{a_0^{l}}\), \(\mathbf{a_1^{l}}\), …, \(\mathbf{a_n^{l}}\) be the outputs of the n neurons in layer l.

Considering the jth neuron in layer l

The parameterized model function for the jth neuron in layer l is defined as

\(\mathbf{f_{w,b}(a)}=\mathbf{z_j^l= \sum_{k=0}^m w_{jk}^l a_k^{l-1} +b_j^l}\) where the numbers of neurons in layers l-1 and l are m and n, respectively.

As a dot product, this can be written as

\(\mathbf{z_j^l=[ w_{j0}^l\enspace w_{j1}^l \enspace ... w_{jm}^l] \begin{bmatrix} a_0^{l-1} \\\\ a_1^{l-1} \\\\..... \\\\ a_m^{l-1} \end{bmatrix}+b_j^l}\)

The activation, which is the output of the jth neuron in layer l, is obtained by applying the activation function \(\sigma\) to the model function \(z_j^l\)

\(\mathbf{a_j^l=\sigma(z_j^l)}\)

For all \(\mathbf{j=0, \ldots, n}\), these equations can be written together in vector form, where an arrow over a symbol denotes a vector:

\(\mathbf{\vec{z}^{\,l}=W^l \enspace \vec{a}^{\,l-1}+\vec{b}^{\,l}}\)

\(\mathbf{\vec{a}^{\,l}=\sigma(\vec{z}^{\,l})}\) ……………………………………………… \(\mathbf{(1)}\)

Here \(\mathbf{W^l}\) is an n × m matrix representing the weights of all connections from layer l − 1 to layer l

\(W^l=\begin{bmatrix} w_{00}^{l} \enspace w_{01}^{l} \enspace .... \enspace w_{0m}^{l} \\\\ w_{10}^{l} \enspace w_{11}^{l} \enspace .... \enspace w_{1m}^{l} \\\\..... \\\\ w_{n0}^{l} \enspace w_{n1}^{l} \enspace .... \enspace w_{nm}^{l}\end{bmatrix}\) and \(\vec{a}^{\,l-1}=\begin{bmatrix} a_0^{l-1} \\\\ a_1^{l-1} \\\\..... \\\\ a_m^{l-1} \end{bmatrix}\) and \(\vec{b}^{\,l}=\begin{bmatrix} b_0^{l} \\\\ b_1^{l} \\\\..... \\\\ b_n^{l} \end{bmatrix}\) and

\(\sigma(\vec{z}^{\,l})= \begin{bmatrix} \sigma (z_0^{l}) \\\\ \sigma (z_1^{l}) \\\\..... \\\\ \sigma (z_n^{l}) \end{bmatrix}\)
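Equation (1) can be sketched in a few lines of plain Python. This is a minimal illustration rather than an optimized implementation: the function name `linear_forward`, the layer sizes, and all numeric values are assumptions made up for the example.

```python
import math

def sigmoid(z):
    """Logistic sigmoid, the activation function used in this post."""
    return 1.0 / (1.0 + math.exp(-z))

def linear_forward(W, a_prev, b):
    """Apply equation (1): z = W a_prev + b, then a = sigmoid(z).

    W is a list of rows (one row per neuron in layer l), a_prev holds the
    activations of layer l-1, and b holds one bias per neuron in layer l.
    """
    z = [sum(w_jk * a_k for w_jk, a_k in zip(row, a_prev)) + b_j
         for row, b_j in zip(W, b)]
    a = [sigmoid(z_j) for z_j in z]
    return z, a

# Hypothetical layer: 3 neurons in layer l-1 feeding 2 neurons in layer l.
W = [[0.5, -1.0, 2.0],
     [1.0, 0.0, -0.5]]
b = [0.0, 0.5]
a_prev = [1.0, 2.0, 0.5]

z, a = linear_forward(W, a_prev, b)
```

Each row of `W` plays the role of the row vector in the dot product form above, so `z[j]` is the weighted sum for the jth neuron and `a[j]` is its activation.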

Equation (1) describes forward propagation through a single linear layer, layer \(\mathbf{l}\), of a neural network. In a multilayer perceptron (MLP), which consists of a series of fully connected layers from layer 0 to layer L, the final output is obtained by applying this equation repeatedly, starting from the input data. Each application transforms the output of the previous layer, so information flows through the network until the last layer produces the output.

This expression is evaluated incrementally through the repeated application of the linear layer.

\(\mathbf{\vec{a}^{\,0}=\sigma(W^0 \enspace \vec{x}+\vec{b}^{\,0})}\)

\(\mathbf{\vec{a}^{\,1}=\sigma(W^1 \enspace \vec{a}^{\,0}+\vec{b}^{\,1})}\)

……………………

\(\mathbf{\vec{a}^{\,L}=\sigma(W^L \enspace \vec{a}^{\,L-1}+\vec{b}^{\,L})}\)
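The repeated application of the linear layer can be sketched as a simple loop. The helper name `mlp_forward`, the 2-3-1 network shape, and the parameter values below are assumptions chosen only for illustration.

```python
import math

def sigmoid(z):
    """Logistic sigmoid activation."""
    return 1.0 / (1.0 + math.exp(-z))

def mlp_forward(layers, x):
    """Feed x through every (W, b) pair in order.

    Returns the list of activation vectors [a^0, a^1, ..., a^L].
    """
    activations = []
    a = x
    for W, b in layers:
        z = [sum(w * v for w, v in zip(row, a)) + b_j
             for row, b_j in zip(W, b)]
        a = [sigmoid(z_j) for z_j in z]
        activations.append(a)
    return activations

# Hypothetical network: 2 inputs, 3 neurons in layer 0, 1 neuron in layer 1.
layers = [
    ([[0.1, -0.2], [0.4, 0.3], [-0.5, 0.2]], [0.0, 0.1, -0.1]),  # W^0, b^0
    ([[0.3, -0.6, 0.9]], [0.05]),                                # W^1, b^1
]

activations = mlp_forward(layers, [1.0, 2.0])
output = activations[-1]  # activation of the last layer
```

Keeping every intermediate activation, rather than only the final one, is a deliberate choice here: back propagation needs them later.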

Backward Propagation

Loss function and training

Let \(y\) represent the output generated by the model and \(\hat{y}\) the actual (ground truth) value that we aim to predict. To quantify the difference between the two, we use the squared error \(\mathbf{ (y - \hat{y})^2 }\), the quantity at the heart of the widely used mean squared error (MSE) loss. For simplicity, we use it as our loss function for training, summed over all training examples \(i\) and scaled by \(\frac{1}{2}\), a factor that cancels when we differentiate.

\(L= \mathbf{\frac{1}{2} \sum_{i} (y_i-\hat{y}_i)^2}\) ………………………………………………………… \(\mathbf{(2)}\)
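As a small sketch of equation (2), the loss is half the sum of squared differences between model outputs and ground truth values. The function name `half_squared_error` and the numbers below are made up for the example.

```python
def half_squared_error(outputs, targets):
    """Equation (2): half the sum of squared differences over all examples."""
    return 0.5 * sum((y - y_hat) ** 2 for y, y_hat in zip(outputs, targets))

outputs = [0.8, 0.3, 0.5]  # hypothetical model outputs y
targets = [1.0, 0.0, 0.5]  # hypothetical ground truth values y-hat

loss = half_squared_error(outputs, targets)
```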

We can flatten each layer's weight matrix \(\mathbf{W^l}\) and its bias vector \(\mathbf{b^l}\) into vectors and concatenate them across all layers in sequence. The result is two long vectors, one holding every weight and the other every bias in the multilayer perceptron (MLP). Together they form a unified representation of all of the model's parameters, which is convenient for optimization during training.

\(\mathbf{\vec{w}=[ w_{00}^0\enspace w_{01}^0 \enspace ..... w_{00}^1 w_{01}^1\enspace ... \enspace w_{00}^L w_{01}^L \enspace ..]}\)

\(\mathbf{\vec{b}=[ b_{0}^0\enspace b_{1}^0 \enspace ..... b_{0}^1 b_{1}^1\enspace ... \enspace b_{0}^L b_{1}^L \enspace ..]}\)

The objective of training is to find the weights and biases that reduce the loss (equation 2) to its lowest possible level.

We begin by calculating the gradients of the loss function with respect to the weights and biases of our model. The gradient points in the direction in which the loss increases fastest, so to reduce the loss we move each weight and bias in the opposite direction, by an amount proportional to its gradient. By repeating this update, we progressively move toward a minimum of the loss function and improve the model's performance.

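The equations for updating weights and biases in gradient descent are

\(\mathbf{\vec{w}=\vec{w}- \lambda \nabla_{\vec{w}} L }\)

\(\mathbf{\vec{b}=\vec{b}- \lambda \nabla_{\vec{b}} L }\) ……..……………………………………… \(\mathbf{(3)}\)

where \(\mathbf{\nabla_{\vec{w}} L}\) is the vector of partial derivatives \(\mathbf{\frac{\partial L}{\partial w_{jk}^l}}\) for all l, j, k and \(\mathbf{\nabla_{\vec{b}} L}\) is the vector of partial derivatives \(\mathbf{\frac{\partial L}{\partial b_{j}^l}}\) for all l, j.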

The equations for updating individual weights and biases using their partial derivatives are

\(\mathbf{w_{jk}^l=w_{jk}^l- \lambda \frac{\partial L}{\partial w_{jk}^l} }\) for all l, j, k

\(\mathbf{b_j^l=b_j^l- \lambda \frac{\partial L}{\partial b_{j}^l} }\) …………………………………………………………. \(\mathbf{(4)}\)

Gradient descent is an iterative optimization algorithm that updates the weights and biases of a model to minimize the loss function. It does this by applying equation \(\mathbf{(3)}\) in each iteration, adjusting the model parameters based on the calculated error. This is equivalent to updating each weight and bias individually using its own partial derivative, as in equation \(\mathbf{(4)}\). Each update nudges the parameters toward lower loss, gradually improving the model.
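The update rule in equation (4) can be sketched on a deliberately tiny problem. The toy model below (a single linear neuron without an activation function), the helper names, the training pair, and the learning rate are all assumptions chosen so the behaviour is easy to follow; they are not part of the network discussed in this post.

```python
def gradient_descent_step(params, grads, learning_rate):
    """Equation (4): move every parameter against its own partial derivative."""
    return [p - learning_rate * g for p, g in zip(params, grads)]

def loss_and_grads(params, x, target):
    """Hypothetical toy model y = w*x + b with loss 0.5 * (y - target)^2."""
    w, b = params
    error = w * x + b - target
    return 0.5 * error ** 2, [error * x, error]  # loss, [dL/dw, dL/db]

params = [0.0, 0.0]   # initial w and b
x, target = 2.0, 1.0  # a single made-up training pair
history = []          # loss recorded before each update

for _ in range(20):
    loss, grads = loss_and_grads(params, x, target)
    history.append(loss)
    params = gradient_descent_step(params, grads, learning_rate=0.1)
```

With these particular values the error halves at every step, so `history` shrinks steadily toward zero. A learning rate that is too large would make the updates overshoot instead.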

Back propagation with single neuron per layer

We will walk through back propagation on a simple network that has only one neuron per layer. This simplification lets us drop the subscripts on individual weights and biases, as there is only one weight and one bias between two consecutive layers. We will use superscripts to indicate the layer. We will use the squared error loss introduced above and focus on a single input-output pair, denoted \(\mathbf{x_i}\) and \(\mathbf{\hat{y}_i}\). The total loss L, which is the sum across all training data instances, can be derived by applying the same steps to each instance.

Forward propagation for an arbitrary layer l is defined as

\(\mathbf{z^l=w^l a^{l-1} + b^l}\) and \(\mathbf{a^l=\sigma(z^l)}\) …………………………………………….. \(\mathbf{(5)}\)

The loss function for a given pair \(\mathbf{(x_i,\hat{y}_i)}\) is \(L= \mathbf{\frac{1}{2} (a^L-\hat{y_i})^2}\), where the superscript L denotes the last layer ………………………… \(\mathbf{(6)}\)

Partial derivative of loss with respect to the weights (w) for the last layer, L

\(\mathbf{\frac{\partial L}{\partial w^L}= \frac{\partial L}{\partial z^L}\frac{\partial z^L}{\partial w^L}= \frac{\partial L}{\partial z^L} a^{L-1}}\) ………………………………………. \(\mathbf{(7)}\)

since, from equation \(\mathbf{(5)}\),

\(\mathbf{\frac{\partial z^L}{\partial w^L}= a^{L-1}}\) ………………………………… \(\mathbf{(8)}\)

Using the chain rule for partial derivatives,

\(\mathbf{\frac{\partial L}{\partial z^L}= \frac{\partial L}{\partial a^L} \frac{\partial a^L}{\partial z^L}}\) ………………………….. \(\mathbf{(9)}\)

where

\(\mathbf{\frac{\partial L}{\partial a^L}= (a^L-\hat{y_i}) }\) ………………………………………….. \(\mathbf{(10)}\)

\(\mathbf{\frac{\partial a^L}{\partial z^L}= \frac{\partial \sigma(z^L)}{\partial z^L} }\) ………………………………………………..…. \(\mathbf{(11)}\)

The backpropagation algorithm is a powerful tool used in training artificial neural networks, and its effectiveness is not limited to just the sigmoid \(\mathbf{\sigma}\) activation function. In fact, back propagation can work well with a variety of activation functions, including ReLU (Rectified Linear Unit), tanh (hyperbolic tangent), and softmax, among others. Each of these functions has unique properties that can lead to improved performance in different contexts. For instance, while the sigmoid function is helpful for binary classification tasks, the ReLU function is often preferred in deeper networks because it mitigates issues related to vanishing gradients, allowing for faster convergence. The flexibility of back propagation in accommodating multiple activation functions enables it to be applied across a wide range of neural network architectures and applications, enhancing its versatility and effectiveness in machine learning.

These functions are differentiable (ReLU everywhere except at zero), which is what gradient descent needs in order to compute an update at each iteration. Their derivatives can become very small, though: the derivatives of sigmoid and tanh approach zero for inputs of large magnitude, and the derivative of ReLU is exactly zero for negative inputs. In such flat regions the gradient shrinks or vanishes and learning slows down, which is one reason the choice of activation function matters.

Some popular activation functions and their derivatives are shown below.
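As a quick reference, here are three of the activation functions mentioned above together with their derivatives. These are standard results, written in this post's notation:

\(\mathbf{\sigma(x)=\frac{1}{1+e^{-x}}, \qquad \frac{d\sigma(x)}{dx}=\sigma(x)(1-\sigma(x))}\)

\(\mathbf{\tanh(x)=\frac{e^{x}-e^{-x}}{e^{x}+e^{-x}}, \qquad \frac{d\tanh(x)}{dx}=1-\tanh^2(x)}\)

\(\mathbf{\mathrm{ReLU}(x)=\max(0,x), \qquad \frac{d\,\mathrm{ReLU}(x)}{dx}=1 \text{ for } x>0, \enspace 0 \text{ for } x<0}\)

The derivative of ReLU is not defined at \(x=0\); implementations commonly assign it the value 0 there.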

For our current use case we have picked the sigmoid activation function.

Let's calculate the derivative of the sigmoid function \(\mathbf{\sigma(x)=\frac{1}{1+e^{-x}}}\) with respect to a variable x

\(\mathbf{\frac{d \sigma(x)}{dx}=\frac{d\,(1+e^{-x})^{-1}}{dx} =-(1+e^{-x})^{-2}\,\frac{d}{dx}(1+e^{-x})=-(1+e^{-x})^{-2}\,(-e^{-x})=\frac{e^{-x}}{(1+e^{-x})^{2}}=\frac{1}{1+e^{-x}}\cdot\frac{e^{-x}}{1+e^{-x}}=\sigma(x)(1-\sigma(x))}\)

Now replacing x with \(z^L\):

\(\mathbf{\frac{\partial a^L}{\partial z^L}= \frac{\partial \sigma(z^L)}{\partial z^L} =\sigma(z^L)(1-\sigma(z^L))=a^L(1-a^L)}\) …………………………… \(\mathbf{(12)}\)
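One way to gain confidence in equation (12) is to compare the closed form with a numerical derivative. The sketch below uses a central finite difference as an independent check; the helper names, the step size `h`, and the sample points are arbitrary choices for the example.

```python
import math

def sigmoid(x):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_prime(x):
    """Closed form from equation (12): sigma(x) * (1 - sigma(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

def central_difference(f, x, h=1e-5):
    """Numerical derivative, used only as an independent check."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

points = [-3.0, -0.5, 0.0, 1.0, 4.0]
max_gap = max(abs(sigmoid_prime(x) - central_difference(sigmoid, x))
              for x in points)
```

If the derivation is right, `max_gap` should be tiny at every sample point. The derivative peaks at `x = 0`, where it equals 0.25, and shrinks toward zero as `|x|` grows.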

Now substituting \(\mathbf{(10)}\) and \(\mathbf{(12)}\) in \(\mathbf{(9)}\) we get

\(\mathbf{\frac{\partial L}{\partial z^L}= (a^L-\hat{y_i})( a^L(1-a^L)) }\) ………………………………………………. \(\mathbf{(13)}\)

Now substituting \(\mathbf{(8)}\) and \(\mathbf{(13)}\) in equation \(\mathbf{(7)}\) we get

\(\mathbf{\frac{\partial L}{\partial w^L}= a^{L-1}(a^L-\hat{y_i})(a^L(1-a^L)) }\) ………………………………………. \(\mathbf{(14)}\)

Partial derivative of loss with respect to the bias (b) for the last layer, L

\(\mathbf{\frac{\partial L}{\partial b^L}= \frac{\partial L}{\partial z^L}\frac{\partial z^L}{\partial b^L}= \frac{\partial L}{\partial z^L} \cdot 1}\) ………………………………………………………. \(\mathbf{(15)}\)

So \(\mathbf{\frac{\partial L}{\partial b^L}=(a^L-\hat{y_i})(a^L(1-a^L))}\) …………………………………………. \(\mathbf{(16)}\)

Using equations \(\mathbf{(14)}\) and \(\mathbf{(16)}\), the weight and bias of the last layer are updated according to equation \(\mathbf{(4)} \):

\(\mathbf{w^L=w^L- \lambda \frac{\partial L}{\partial w^L} }\)

\(\mathbf{b^L=b^L- \lambda \frac{\partial L}{\partial b^L} }\)

where \(\mathbf{\lambda}\) is the learning rate, which determines how big a step is taken in each iteration of gradient descent.
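The last-layer gradients in equations (14) and (16) can be checked numerically and then used for one update step. This is a sketch under made-up values: the function names, the parameter values, the target, and the learning rate `lam` are assumptions for illustration only.

```python
import math

def sigmoid(z):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-z))

def loss(w, b, a_prev, target):
    """Equations (5) and (6) for the last layer with one neuron."""
    a = sigmoid(w * a_prev + b)
    return 0.5 * (a - target) ** 2

def last_layer_grads(w, b, a_prev, target):
    """Return (dL/dw, dL/db) for the last layer."""
    a = sigmoid(w * a_prev + b)
    dL_dz = (a - target) * a * (1.0 - a)  # equation (13)
    return a_prev * dL_dz, dL_dz          # equations (14) and (16)

# Hypothetical values for the last layer and one training target.
w, b, a_prev, target, lam = 0.7, -0.2, 0.6, 1.0, 0.5

dL_dw, dL_db = last_layer_grads(w, b, a_prev, target)

# Finite-difference estimates of the same derivatives, as a sanity check.
h = 1e-6
num_dw = (loss(w + h, b, a_prev, target) - loss(w - h, b, a_prev, target)) / (2 * h)
num_db = (loss(w, b + h, a_prev, target) - loss(w, b - h, a_prev, target)) / (2 * h)

# One gradient descent step on the last layer's weight and bias.
w_new = w - lam * dL_dw
b_new = b - lam * dL_db
```

For these values the loss evaluated at `w_new` and `b_new` is lower than at the starting point, which is what a single small gradient descent step is meant to achieve.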

Once the last layer L has been handled, back propagation moves backwards through the preceding layers one at a time. At each layer l, the chain rule turns the already computed \(\mathbf{\frac{\partial L}{\partial z^{l+1}}}\) into \(\mathbf{\frac{\partial L}{\partial z^{l}}}\), from which the gradients for \(\mathbf{w^l}\) and \(\mathbf{b^l}\) follow in the same way as in equations \(\mathbf{(14)}\) and \(\mathbf{(16)}\). We continue layer by layer until we reach the very first layer of the network, so that every weight and bias is adjusted to reduce the loss.
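The layer-by-layer procedure can be sketched for a network with one neuron per layer. The recurrence used to step from one layer to the previous one, \(\frac{\partial L}{\partial z^{l-1}} = \frac{\partial L}{\partial z^{l}} \, w^l \, a^{l-1}(1-a^{l-1})\), is not written out in this post; it follows from applying the chain rule to equation (5) with a sigmoid activation. The function names and all numeric values are assumptions for illustration.

```python
import math

def sigmoid(z):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-z))

def forward(ws, bs, x):
    """Equation (5) layer by layer; returns [x, a^0, a^1, ..., a^L]."""
    activations = [x]
    for w, b in zip(ws, bs):
        activations.append(sigmoid(w * activations[-1] + b))
    return activations

def backward(ws, activations, target):
    """Return dL/dw^l and dL/db^l for every layer, working from L down to 0."""
    n = len(ws)
    grad_w, grad_b = [0.0] * n, [0.0] * n
    a_out = activations[-1]
    delta = (a_out - target) * a_out * (1.0 - a_out)  # dL/dz^L, equation (13)
    for l in range(n - 1, -1, -1):
        a_prev = activations[l]     # input to layer l (x when l == 0)
        grad_w[l] = a_prev * delta  # same form as equation (14)
        grad_b[l] = delta           # same form as equation (16)
        if l > 0:
            # Chain rule step: dL/dz^{l-1} = dL/dz^l * w^l * sigma'(z^{l-1})
            delta = delta * ws[l] * a_prev * (1.0 - a_prev)
    return grad_w, grad_b

def loss(ws, bs, x, target):
    """Equation (6) evaluated on the network output."""
    return 0.5 * (forward(ws, bs, x)[-1] - target) ** 2

# Hypothetical three-layer chain and a single training pair.
ws, bs = [0.5, -1.2, 0.8], [0.1, 0.3, -0.4]
x, target = 1.5, 1.0

grad_w, grad_b = backward(ws, forward(ws, bs, x), target)
```

Note that this sketch computes every gradient from the current weights before any parameter is changed, and only afterwards would the updates from equation (4) be applied to all layers.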