Implementing LSTM RNN using Pytorch

Previously, I wrote an article titled "Recurrent Neural Network," where I delved into the inner workings of Recurrent Neural Networks (RNNs) and their significance in the field of machine learning. Subsequently, I provided a tutorial “Implementing LSTM RNN using Keras and TensorFlow” that illustrated how to implement RNNs using popular deep learning libraries, Keras and TensorFlow. In this blog post, I am excited to take it a step further by guiding you through the implementation of Long Short-Term Memory (LSTM) networks, a specific type of RNN, using PyTorch. LSTMs are particularly effective in handling sequential data, and I look forward to exploring this powerful tool with you.

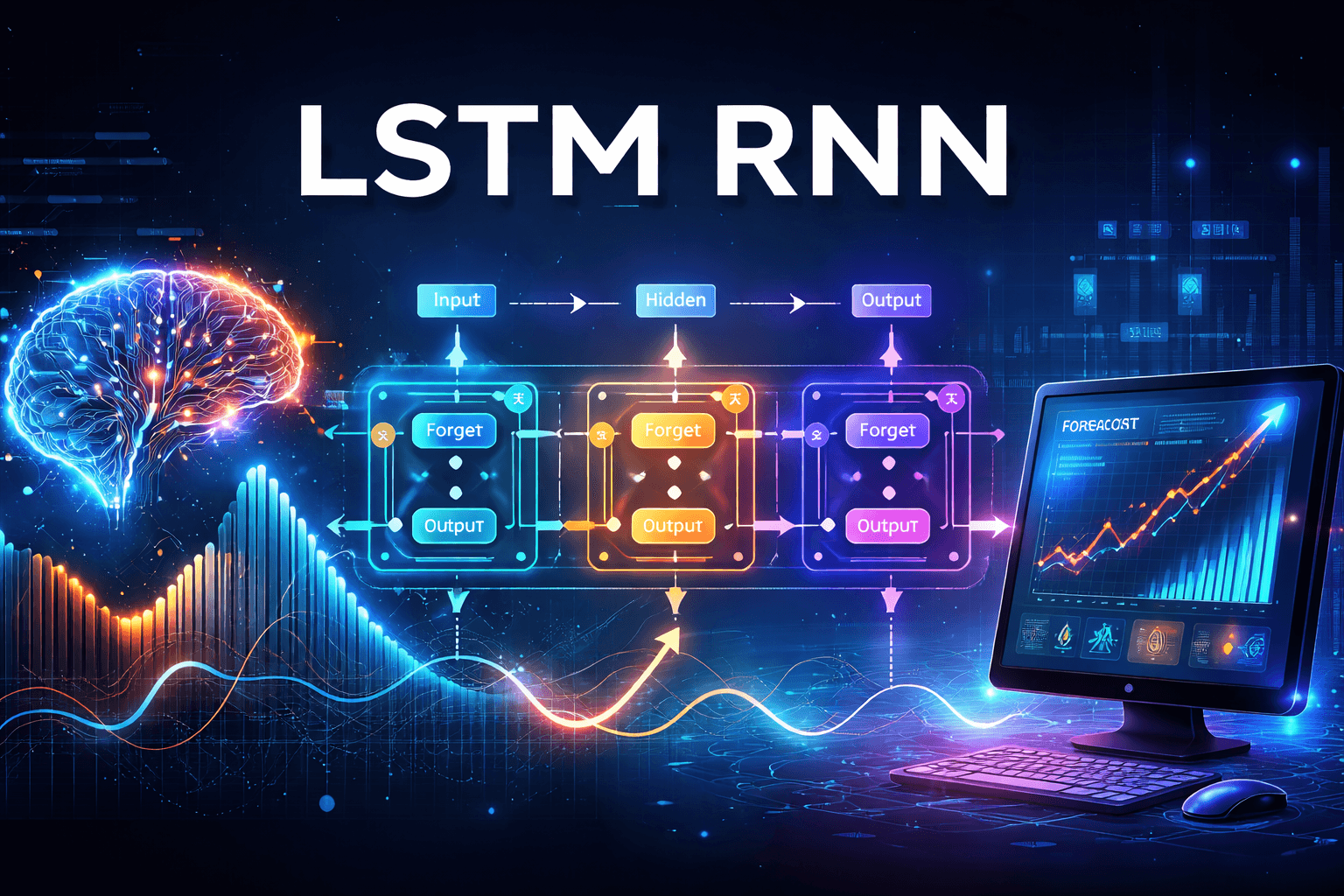

I am including a diagram from my previous blog post that illustrates the architecture of Long Short-Term Memory (LSTM) networks. This will help to refresh your memory and provide a solid foundation as we begin writing the code.

In general the current input vector and the previous short-term state/hidden state are fed to four different fully connected layers.

The main layer which outputs candidate value is responsible for outputting . It analyzes the current inputs and the previous short-term/hidden state . The most important information is stored in the long-term state while the rest is discarded.

The three other layers(forget, update and output) are gate controllers. Since they use the logistic activation function sigmoid , the outputs range from 0 to 1. As you can see, the gate controllers’ outputs are fed to element-wise multiplication operations: if they output 0s they close the gate, and if they output 1s they open it. Specifically:

The forget gate (controlled by ) controls which parts of the long-term state should be erased.

The update gate (controlled by ) controls which parts of should be added to the long-term state.

Finally, the output gate (controlled by ) controls which parts of the long-term state should be read and output at this time step, both to and to .

Let's try to understand this content through the image shown below for simple RNN cell

Input Data Block (Left)

Shape: (Batch Size, Time Steps, Num Features)

This cube represents your multivariate time-series input:

Batch: Multiple sequences processed together.

Time Steps (Window Size): How far back in time you look (T).

Features: The number of variables at each time step (D).

👉 At each time step ( t ), the model sees all features together, not one at a time.

Feature Vector at Each Time Step

The feature vectors are represented as follows:

\(( x_1 = [f_1, f_2, f_3, \ldots, f_D] )\)

\(( x_2 = [f_1, f_2, f_3, \ldots, f_D] )\)

...

\(( x_T = [f_1, f_2, f_3, \ldots, f_D] )\)

This means:

At time step ( t ), the input consists of one vector of ( D ) features.

There is NOT one RNN per feature; all features are fed simultaneously into the same RNN cell.

RNN Cells (Middle/Right)

Each box labeled “RNN Cell (One Shared Network)” represents the same RNN, reused at every time step.

Important points:

The boxes are drawn multiple times only to illustrate time unfolding.

Weights are shared across all time steps.

This is one RNN, not many.

Mathematically:

\(( h_t = RNN(x_t, h_{t-1}) )\)

Where:

\(( x_t )\) = all features at time ( t )

\(( h_t ) \) \= hidden state at time ( t )

Hidden States \(\mathbf{(( h_1, h_2, \ldots, h_T ))}\)

Each RNN cell outputs:

- \(( h_1, h_2, h_3, \ldots, h_T )\)

These represent:

The model's memory after processing data up to that time.

Each \(( h_t )\) is typically a vector of size "hidden_size."

Hidden states:

Change at every time step.

Carry temporal information forward.

At every time step, one RNN processes all features together, updates its memory, and passes that memory to the next time step using the same weights.

Implementation

Ihave utilized Jupyter Notebook, which is installed on my Mac, to run my code. However, an excellent alternative is Google Colab, which allows for seamless execution of the code presented in this blog.

In order to work with the code effectively, there are specific packages that I need to install within my virtual environment. These packages include:

PyTorch: A powerful deep learning library that provides flexibility and ease of use for building and training neural networks.

TorchMetrics: A library that offers a wide range of metrics specifically designed for evaluating the performance of generative models.

Loading packages

import torch

import torch.nn as nn

import torch.optim as optim

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from torch.utils.data import DataLoader

from pathlib import Path

import tarfile

import urllib.request

import torchmetrics

Leveraging GPU/MPS to boost performance

if torch.cuda.is_available():

device = "cuda"

elif torch.backends.mps.is_available():

device = "mps"

else:

device = "cpu"

device

Download the data

def download_and_extract_ridership_data():

tarball_path = Path("datasets/ridership.tgz")

if not tarball_path.is_file():

Path("datasets").mkdir(parents=True, exist_ok=True)

url = "https://github.com/learner14/data"

urllib.request.urlretrieve(url, tarball_path)

with tarfile.open(tarball_path) as housing_tarball:

housing_tarball.extractall(path="datasets", filter="data")

download_and_extract_ridership_data()

path = Path("datasets/ridership/CTA_-_Ridership_-_Daily_Boarding_Totals.csv")

df = pd.read_csv(path, parse_dates=["service_date"])

df.columns = ["date", "day_type", "bus", "rail", "total"] # shorter names

df = df.sort_values("date").set_index("date")

df = df.drop("total", axis=1)

df = df.drop_duplicates()

Lets look at the first 5 data rows

df.head()

day_type bus rail

date

2001-01-01 U 297192 126455

2001-01-02 W 780827 501952

2001-01-03 W 824923 536432

2001-01-04 W 870021 550011

2001-01-05 W 890426 557917

Plotting a sample to look at data

plt.rc('font', size=14)

plt.rc('axes', labelsize=14, titlesize=14)

plt.rc('legend', fontsize=14)

plt.rc('xtick', labelsize=10)

plt.rc('ytick', labelsize=10)

df["2021-03":"2021-05"].plot(grid=True, marker=".", figsize=(8, 3.5))

plt.show()

Data Analysis

Here is a comprehensive analysis of the time series data concerning Bus and Rail ridership from approximately March to May 2021.

Daily Time Series:

Bus Ridership (blue): Ranges from about 160,000 to 360,000.

Rail Ridership (orange): Ranges from approximately 95,000 to 225,000.

Key Observations:

Strong Weekly Seasonality: Both bus and rail ridership exhibit a pronounced pattern of weekly fluctuations.

Upward Trend: There is a noticeable increase in ridership over time for both modes of transport.

Highly Synchronized Dips: Whenever bus ridership declines, rail ridership also tends to decline, and vice versa during increases.

Increasing Variability Over Time: The variability in ridership data appears to be growing.

Given these characteristics, multivariate forecasting models, such as the Multivariate Long Short-Term Memory (LSTM) model, are likely to perform better than univariate models in predicting future ridership trends.

Preparing data for model

Lets create Multivariate Dataset for train and bus

df_mulvar = df[["rail", "bus"]] / 1e6 # use both rail & bus series as input

df_mulvar.head()

First 5 rows are shown below

rail bus

date

2001-01-01 0.126455 0.297192

2001-01-02 0.501952 0.780827

2001-01-03 0.536432 0.824923

2001-01-04 0.550011 0.870021

2001-01-05 0.557917 0.890426

we add day_type in data as one hot encoding for three day_type .

next_day_type_A: for saturday

next_day_type_U : for sunday and holiday

next_day_type_W.: for weekdays

df_mulvar["next_day_type"] = df["day_type"].shift(-1) # we know tomorrow's type

df_mulvar = pd.get_dummies(df_mulvar, dtype=float) # one-hot encode day type

df_mulvar.head()

rail bus next_day_type_A next_day_type_U next_day_type_W

date

2001-01-01 0.126455 0.297192 0.0 0.0 1.0

2001-01-02 0.501952 0.780827 0.0 0.0 1.0

2001-01-03 0.536432 0.824923 0.0 0.0 1.0

2001-01-04 0.550011 0.870021 0.0 0.0 1.0

2001-01-05 0.557917 0.890426 1.0 0.0 0.0

The following code generates sliding

windows from a time series, which can be used for predicting the next value in the sequence. The input parameters consist of the time series data itself and a specified window length, denoted as T. This method enables the extraction of overlapping segments of the series, facilitating the analysis and forecasting of future data points based on the patterns observed in these windows.

class MulvarTimeSeriesDataset(TimeSeriesDataset):

def __getitem__(self, idx):

window, target = super().__getitem__(idx)

return window, target[:2]

We now create train, validation, and test tensors for rail ridership through date slicing. Using pandas' label-based time slicing, the command df[["rail"]]["2016-01":"2018-12"] selects the 'rail' column for the specified date range.

Next, we apply unit scaling by dividing the raw rider counts by 1 million (1e6). This conversion helps keep the values numerically smaller, which contributes to training stability.

The splits are as follows:

rail_train: January 2016 to December 2018

rail_valid: January 2019 to May 2019

rail_test: June 2019 to the end of the data

Typically, these tensors can be fed into a TimeSeriesDataset with a specified window_length to generate sliding windows for next-day predictions.

mulvar_train = torch.FloatTensor(df_mulvar["2016-01":"2018-12"].values)

mulvar_valid = torch.FloatTensor(df_mulvar["2019-01":"2019-05"].values)

mulvar_test = torch.FloatTensor(df_mulvar["2019-06":].values)

Create TimeSeriesDataset for train, validate and test for rail ridership

window_length = 56

mulvar_train_set = MulvarTimeSeriesDataset(mulvar_train, window_length)

mulvar_train_loader = DataLoader(mulvar_train_set, batch_size=32, shuffle=True)

mulvar_valid_set = MulvarTimeSeriesDataset(mulvar_valid, window_length)

mulvar_valid_loader = DataLoader(mulvar_valid_set, batch_size=32)

mulvar_test_set = MulvarTimeSeriesDataset(mulvar_test, window_length)

mulvar_test_loader = DataLoader(mulvar_test_set, batch_size=32)

Create following functions to evaluate and train the model(LSTM model).

evaluate_tm(model, data_loader, metric)

train(model, optimizer, loss_fn, metric, train_loader, valid_loader, n_epochs, patience=10, factor=0.1)

eval_tm function(model, data_loader, metric)

The following process evaluates a trained model on a dataset and returns a performance metric (e.g., Mean Absolute Error (MAE) or Accuracy) without updating the model weights.

Evaluation Mode: Use

model.eval()to set the model in evaluation mode. This adjusts the behavior of layers like dropout and batch normalization to ensure consistent results during inference.No Gradients: Employ

torch.no_grad()to disable gradient tracking. This saves memory and speeds up the evaluation process.Batch Loop: For each batch of data:

Move

X_batchandy_batchto the appropriate device (e.g., GPU).Compute predictions:

y_pred = model(X_batch).Update the performance metric using the predictions and the true targets.

Metric Lifecycle:

Call

metric.reset()to clear any prior state.Use

metric.compute()to return the aggregated score across all batches.

Inputs:

model(an instance ofnn.Module)data_loader(provides batches for evaluation)metric(an object fromtorchmetrics)

Output: The evaluation process outputs a single scalar metric that summarizes the model's performance over the entire data loader.

def evaluate_tm(model, data_loader, metric):

model.eval()

metric.reset()

with torch.no_grad():

for X_batch, y_batch in data_loader:

X_batch, y_batch = X_batch.to(device), y_batch.to(device)

y_pred = model(X_batch)

metric.update(y_pred, y_batch)

return metric.compute()

train(model, optimizer, loss_fn, metric, train_loader, valid_loader, n_epochs, patience=10, factor=0.1)

This function orchestrates the model training process while tracking metrics and adapting the learning rate.

Inputs: It requires the following parameters: model, optimizer, loss_fn, metric, train_loader, valid_loader, n_epochs, patience, and factor.

Scheduler: The function employs ReduceLROnPlateau with mode="min" to decrease the learning rate when there is no improvement in the validation metric.

Loop: For each epoch, the function computes y_pred, calculates the loss, performs backpropagation (loss.backward()), updates the model parameters (optimizer.step()), resets the gradients (optimizer.zero_grad()), and updates the metric.

Logging: It records the average training loss and training metric, evaluates the validation metric using evaluate_tm(), and prints a concise summary of the epoch.

Output: The function returns a history object containing train_losses, train_metrics, and valid_metrics, which can be used for plotting and analysis.

def train(model, optimizer, loss_fn, metric, train_loader, valid_loader,

n_epochs, patience=10, factor=0.1):

scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(

optimizer, mode="min", patience=patience, factor=factor)

history = {"train_losses": [], "train_metrics": [], "valid_metrics": []}

for epoch in range(n_epochs):

total_loss = 0.0

metric.reset()

model.train()

for X_batch, y_batch in train_loader:

X_batch, y_batch = X_batch.to(device), y_batch.to(device)

y_pred = model(X_batch)

loss = loss_fn(y_pred, y_batch)

total_loss += loss.item()

loss.backward()

optimizer.step()

optimizer.zero_grad()

metric.update(y_pred, y_batch)

history["train_losses"].append(total_loss / len(train_loader))

history["train_metrics"].append(metric.compute().item())

val_metric = evaluate_tm(model, valid_loader, metric).item()

history["valid_metrics"].append(val_metric)

scheduler.step(val_metric)

print(f"Epoch {epoch + 1}/{n_epochs}, "

f"train loss: {history['train_losses'][-1]:.4f}, "

f"train metric: {history['train_metrics'][-1]:.4f}, "

f"valid metric: {history['valid_metrics'][-1]:.4f}")

return history

Utility function fit_and_evaluate() which will be called to train the model.

Trains a model on

train_loader, evaluates onvalid_loader, and returns the best validation score scaled back to riders.Loss: Uses

nn.HuberLoss()for robustness to outliers compared to MSE.Optimizer: Applies

SGDwith momentum0.95and user-provided learning ratelrfor stable convergence.Metric: Tracks

torchmetrics.MeanAbsoluteError(MAE) on the activedevice.Training: Delegates to

train()withn_epochs,patience, andfactor(forReduceLROnPlateauinsidetrain()), recording loss and metrics each epoch.Returns

min(history["valid_metrics"]) * 1e6— the best validationMAErescaled from “millions” back to riders for an intuitive number.

# extra code – defines a utility function we'll reuse several time

def fit_and_evaluate(model, train_loader, valid_loader, lr, n_epochs=50,

patience=20, factor=0.1):

loss_fn = nn.HuberLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=lr, momentum=0.95)

metric = torchmetrics.MeanAbsoluteError().to(device)

history = train(model, optimizer, loss_fn, metric,

train_loader, valid_loader, n_epochs=n_epochs,

patience=patience, factor=factor)

return min(history["valid_metrics"]) * 1e6

LSTM module

This model unrolls an LSTMCell over the time dimension (many-to-one). It takes the final hidden state and maps it to the output.

class LstmModel(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super().__init__()

self.hidden_size = hidden_size

self.memory_cell = nn.LSTMCell(input_size, hidden_size)

self.output = nn.Linear(hidden_size, output_size)

def forward(self, X):

batch_size, window_length, dimensionality = X.shape

X_time_first = X.transpose(0, 1)

H = torch.zeros(batch_size, self.hidden_size, device=X.device)

C = torch.zeros(batch_size, self.hidden_size, device=X.device)

for X_t in X_time_first:

H, C = self.memory_cell(X_t, (H, C))

return self.output(H)

```

Input X (batch, T, input_size)

┌───────────────────────────────────────────────────────────┐

│ Time-first transpose → X_time_first (T, batch, input_size) │

└───────────────────────────────────────────────────────────┘

│

t = 1 │ t = 2 … t = T

▼

X_1 ──► [ LSTMCell ] ──► H_1,C_1

│

X_2 ──► [ LSTMCell ] ──► H_2,C_2

│

… [ LSTMCell ] …

│

X_T ──► [ LSTMCell ] ──► H_T,C_T

│

▼

take final hidden H_T (batch, hidden_size)

│

▼

Linear(hidden_size → output_size)

│

▼

y_pred (batch, output_size)

```

- `LSTMCell` runs one step at a time; `nn.LSTM` can process all steps at once (faster on GPU).

- Many-to-one setup: single output per sequence using the last hidden state.

- For many-to-many tasks, apply a head to each time step (e.g., over all hidden states).

Train the Model

torch.manual_seed(42)

Lstm_model = LstmModel(

input_size=5, hidden_size=32, output_size=2).to(device)

Lstm_model = Lstm_model.to(device)

fit_and_evaluate(Lstm_model, mulvar_train_loader, mulvar_valid_loader, lr=0.05, n_epochs=50)

Epoch 1/50, train loss: 0.0675, train metric: 0.3049, valid metric: 0.2044

Epoch 2/50, train loss: 0.0184, train metric: 0.1556, valid metric: 0.1573

Epoch 3/50, train loss: 0.0104, train metric: 0.1184, valid metric: 0.0974

...

Epoch 47/50, train loss: 0.0013, train metric: 0.0364, valid metric: 0.0265

Epoch 48/50, train loss: 0.0013, train metric: 0.0365, valid metric: 0.0258

Epoch 49/50, train loss: 0.0013, train metric: 0.0363, valid metric: 0.0305

Epoch 50/50, train loss: 0.0013, train metric: 0.0368, valid metric: 0.0266

Evaluate the model on test dataset

# Evaluate the trained LSTM model on the test set

metric = torchmetrics.MeanAbsoluteError().to(device)

test_mae = evaluate_tm(Lstm_model, mulvar_test_loader, metric).item()

print(f"Test MAE: {test_mae:.6f} ({test_mae*1e6:.2f} riders)")

Test MAE: 0.134912 (134911.54 riders)

Predicting future date

Lets print the last 5 data rows from our data set

# Also show the last 5 rows for context

print("\nLast 5 rows:")

print(df_mulvar.tail(5))

Last 5 rows:

rail bus next_day_type_A next_day_type_U \

date

2021-11-26 0.189694 0.257700 1.0 0.0

2021-11-27 0.187065 0.237839 0.0 1.0

2021-11-28 0.147830 0.184817 0.0 0.0

2021-11-29 0.276090 0.421322 0.0 0.0

2021-11-30 0.302349 0.450230 0.0 0.0

next_day_type_W

date

2021-11-26 0.0

2021-11-27 0.0

2021-11-28 1.0

2021-11-29 1.0

2021-11-30 0.0

'W' (weekday)

'A' (Saturday)

'U' (Sunday/holiday)

Lets do prediction for W(Weekday)

We will create a single instance of time series data using a sliding window of 56 to feed into the RNN model. The one-hot encoding for the "next_day_type_W" in the last row has been modified to "1" for weekday. This change is made to predict the output ( y ) for the next day for both train and bus riders.

# Predict next day's ridership using the last window and a chosen day type

try:

future_day_type = 'W' # options: 'W' (weekday), 'A' (Saturday), 'U' (Sunday/holiday)

# Build the window (use defined window_length or default to 56)

window_len = window_length if 'window_length' in globals() else 56

X_window = df_mulvar.tail(window_len).values.copy()

print(f"Window shape: {X_window.shape}")

# Set the last row's day-type one-hot to the chosen future type

X_window[-1, 3] = 1.0

print(f"Modified last row of window for future day type '{future_day_type}':")

print(X_window[-1])

# Predict

Lstm_model.eval()

with torch.no_grad():

X_t = torch.FloatTensor(X_window).unsqueeze(0).to(device) # (1, T, 5)

print(f"Input tensor shape for prediction: {X_t.shape}")

y_pred = Lstm_model(X_t).squeeze(0).cpu().numpy()

rail_pred_m, bus_pred_m = float(y_pred[0]), float(y_pred[1])

last_date = df_mulvar.index[-1]

future_date = pd.to_datetime(last_date) + pd.Timedelta(days=1)

print(f"Future date: {future_date.date()} (day type={future_day_type})")

print(f"Predicted (millions): rail={rail_pred_m:.6f}, bus={bus_pred_m:.6f}")

print(f"Predicted (riders): rail={rail_pred_m*1e6:.0f}, bus={bus_pred_m*1e6:.0f}")

except NameError as e:

print("Required variables not defined. Ensure df_mulvar, window_length, device, and Lstm_model exist.")

print("Error:", e)

except Exception as e:

print("Prediction failed:", e)

Window shape: (56, 5)

Modified last row of window for future day type 'W':

[0.302349 0.45023 0. 0. 1. ]

Input tensor shape for prediction: torch.Size([1, 56, 5])

Future date: 2021-12-01 (day type=W)

Predicted (millions): rail=0.481875, bus=0.560757

Predicted (riders): rail=481875, bus=560757

Predicted (millions): rail=0.481875, bus=0.560757

Predicted (riders): rail=481875, bus=560757