Seq2Seq Encoder-Decoder Model

The Seq2Seq (sequence-to-sequence) architecture is a neural network design that maps an input sequence to an output sequence, where the two sequences may have different lengths. It is widely used in natural language processing for tasks such as machine translation, text summarization, and conversational AI. The architecture has two components: an encoder and a decoder. Both are often built from Long Short-Term Memory (LSTM) networks, although Gated Recurrent Units (GRUs) are a common alternative.

Encoder: The encoder is the first stage of the model and reads the input sequence, which can be anything from a short sentence to a longer passage of text. Its job is to compress the whole input into a fixed-size context vector: a compact representation that summarizes the information the decoder will need. Because the context vector has the same size no matter how long the input is, it must capture the essential features and meaning of the input in a limited number of values. The encoder is typically built from one or more layers of LSTM cells that process the input one element at a time, carrying information from earlier elements forward so that each new element is interpreted in the context of what came before it.
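The fixed-size property can be seen in a minimal plain-Python sketch. It assumes a simple tanh recurrent cell as a stand-in for the LSTM (the LSTM equations come later), and the sizes `HIDDEN_SIZE` and `INPUT_SIZE` and the random weights are illustrative assumptions. Inputs of different lengths produce context vectors of the same size.

```python
import math
import random

HIDDEN_SIZE = 4  # assumed size of the context vector
INPUT_SIZE = 2   # assumed size of each input vector

rng = random.Random(0)
# One set of weights, reused at every time step.
W_h = [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN_SIZE)] for _ in range(HIDDEN_SIZE)]
W_x = [[rng.uniform(-0.5, 0.5) for _ in range(INPUT_SIZE)] for _ in range(HIDDEN_SIZE)]


def encode(inputs):
    """Fold a sequence of input vectors into one HIDDEN_SIZE vector (simple tanh RNN, not an LSTM)."""
    h = [0.0] * HIDDEN_SIZE
    for x in inputs:
        # The new state depends on the previous state and the current input.
        h = [
            math.tanh(
                sum(w * hj for w, hj in zip(W_h[k], h))
                + sum(w * xj for w, xj in zip(W_x[k], x))
            )
            for k in range(HIDDEN_SIZE)
        ]
    return h


short_ctx = encode([[1.0, 0.0], [0.0, 1.0]])  # 2 time steps
long_ctx = encode([[0.5, -0.5]] * 20)         # 20 time steps
print(len(short_ctx), len(long_ctx))  # 4 4
```

The fixed size is also the design's limitation: a long input has to be squeezed into the same number of values as a short one.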

Decoder: The decoder produces the output sequence. It starts from the context vector created by the encoder and generates the output one element at a time; depending on the application, an element may be a word, a subword token, or another unit. At each step, the decoder conditions on both the context vector (through its initial state) and the elements it has already produced. Feeding its own previous outputs back in is what keeps the generated sequence coherent, which is why this setup works well for tasks like language translation and chatbot responses.

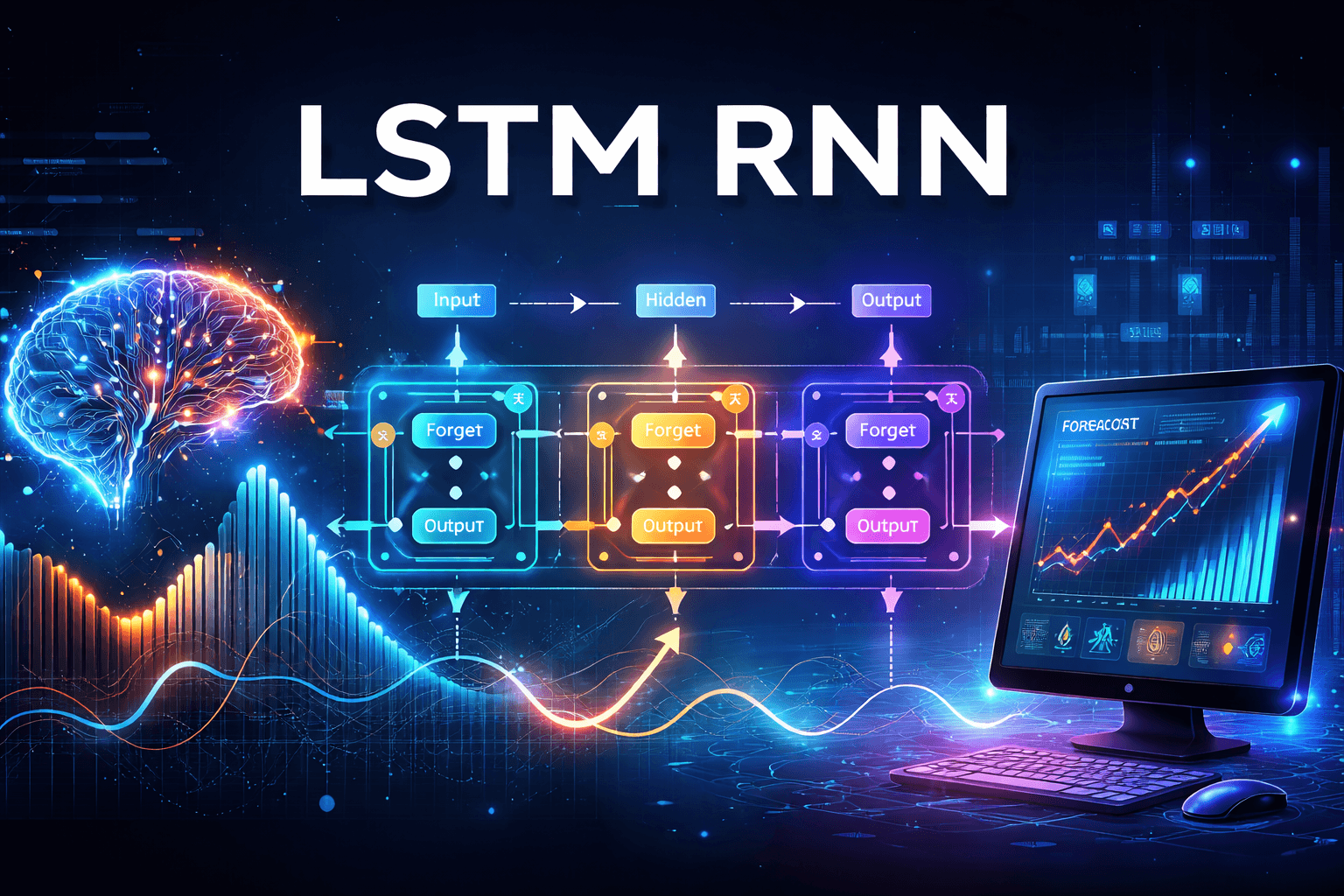

The LSTM architecture suits Seq2Seq tasks because it can learn long-term dependencies in data. Traditional recurrent neural networks (RNNs) suffer from the vanishing gradient problem: as the error signal is propagated back through many time steps it shrinks toward zero, so the network struggles to learn from information early in the sequence. LSTMs mitigate this problem with gates, specifically the input gate (called the update gate in the equations below), the output gate, and the forget gate. These gates regulate the flow of information through the cell state, letting the LSTM keep important details while discarding less relevant information as the sequence unfolds. This matters for tasks that require context over long spans, such as understanding lengthy sentences or multi-sentence paragraphs.
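A rough numeric illustration of why the gates help, under strong simplifying assumptions: in a vanilla RNN the backpropagated gradient is multiplied at every step by roughly \(w \cdot \tanh'(z)\), while along the LSTM's direct cell-state path the factor is the forget gate value, because \(\partial \mathbf{c}^{\langle t \rangle} / \partial \mathbf{c}^{\langle t-1 \rangle}\) along that path is \(\mathbf{\Gamma}_f^{\langle t \rangle}\). The numbers 0.9, 0.8 and 0.99 below are made up for illustration, and the sketch ignores the indirect paths through the gates and the hidden state.

```python
# Illustrative numbers only (assumptions, not measurements).
STEPS = 50

# Vanilla RNN: each step scales the gradient by |w| * tanh'(z); assume 0.9 * 0.8.
rnn_factor = 0.9 * 0.8
# LSTM, direct cell-state path only: each step scales it by the forget gate; assume 0.99.
lstm_factor = 0.99

rnn_grad = rnn_factor ** STEPS
lstm_grad = lstm_factor ** STEPS
print(f"vanilla RNN after {STEPS} steps: {rnn_grad:.2e}")
print(f"LSTM cell path after {STEPS} steps: {lstm_grad:.3f}")
```

When the forget gate stays close to 1, the cell state acts as a path along which gradients decay slowly. When it is close to 0, the LSTM deliberately forgets.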

In summary, Seq2Seq combined with LSTM networks provides a general framework for transforming one sequence into another. The encoder compresses the input into a context vector, and the decoder expands that vector into the desired output sequence. This makes the architecture useful for natural language processing tasks that depend on context, as well as for sequence transformation problems in other domains.

Seq2Seq Encoder part

We are building an Encoder-Decoder model that translates the English phrase "I am" into its Spanish equivalent, "soy." The process begins with an embedding layer that maps each word in the input vocabulary to a dense vector; during training, these vectors are learned so that they capture relationships between words. The embedding layer turns each word of the phrase into such a vector, and these vectors are the inputs to the Long Short-Term Memory (LSTM) network.
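An embedding layer is essentially a lookup table with one row per vocabulary entry. The sketch below assumes a hypothetical toy vocabulary (only "I" and "am" come from the example), an assumed embedding size `EMBED_DIM`, and random rows standing in for learned ones.

```python
import random

EMBED_DIM = 2  # assumed; real models typically use many more dimensions

# Hypothetical toy input vocabulary; only "I" and "am" come from the example.
input_vocab = ["I", "am", "you", "are", "<EOS>"]
token_to_id = {tok: idx for idx, tok in enumerate(input_vocab)}

# One row per token. Random here; in a real model these rows are learned in training.
rng = random.Random(42)
embedding_table = [[rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)] for _ in input_vocab]


def embed(tokens):
    """Replace each token by its row in the embedding table."""
    return [embedding_table[token_to_id[tok]] for tok in tokens]


phrase_vectors = embed(["I", "am"])
print(phrase_vectors)  # two vectors, each of length EMBED_DIM
```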

Before processing the input, we initialize the LSTM's long-term memory (cell state) and short-term memory (hidden state), typically to zeros. We then unroll the LSTM, that is, we apply it once per input element, one time step after another. The unrolled copies all share the same weights and biases, so the network uses a single set of parameters no matter how long the input is.

At each time step, the LSTM computes a new cell state, which carries information across long sequences, and a new hidden state, which carries short-term information. Together, these states let the network retain relevant context and relate different parts of the input to each other.

After the last input element has been processed, the encoder's final long-term and short-term memories together form the context vector. The context vector is the starting input for the decoder, which uses it to produce the corresponding Spanish translation.
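Putting the encoder steps together, here is a minimal plain-Python sketch of an unrolled LSTM encoder: zero-initialized memories, the same parameters applied at every time step, and the final (short-term, long-term) pair returned as the context. The sizes, the random weights, and the two input vectors standing in for the embeddings of "I" and "am" are all assumptions; the per-step computation follows the gate equations given in the next part of this section.

```python
import math
import random

EMBED_DIM = 2    # assumed embedding size
HIDDEN_SIZE = 3  # assumed size of both memories (a and c)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def affine(W, b, v):
    """Return W v + b for a list-of-rows matrix W."""
    return [sum(w * vj for w, vj in zip(row, v)) + bi for row, bi in zip(W, b)]


def init_params(seed=0):
    """Hypothetical random weights and zero biases for the f, i, c and o equations."""
    rng = random.Random(seed)

    def matrix():
        return [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN_SIZE + EMBED_DIM)]
                for _ in range(HIDDEN_SIZE)]

    return {name: (matrix(), [0.0] * HIDDEN_SIZE) for name in ("f", "i", "c", "o")}


def lstm_step(params, a_prev, c_prev, x):
    concat = a_prev + x                                             # [a<t-1>, x<t>]
    f = [sigmoid(z) for z in affine(*params["f"], concat)]          # forget gate
    i = [sigmoid(z) for z in affine(*params["i"], concat)]          # update gate
    c_tilde = [math.tanh(z) for z in affine(*params["c"], concat)]  # candidate value
    o = [sigmoid(z) for z in affine(*params["o"], concat)]          # output gate
    c = [fk * cp + ik * ck for fk, cp, ik, ck in zip(f, c_prev, i, c_tilde)]  # cell state
    a = [ok * math.tanh(ck) for ok, ck in zip(o, c)]                # hidden state
    return a, c


def encode(params, embedded_inputs):
    a = [0.0] * HIDDEN_SIZE  # short-term memory starts at zero
    c = [0.0] * HIDDEN_SIZE  # long-term memory starts at zero
    for x in embedded_inputs:
        a, c = lstm_step(params, a, c, x)  # same params at every unrolled step
    return a, c  # context vector: final (short-term, long-term) memories


params = init_params()
embedded = [[0.3, -0.1], [0.7, 0.2]]  # stand-ins for the embeddings of "I" and "am"
context_a, context_c = encode(params, embedded)
print(context_a, context_c)
```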

The following quantities are computed at every time step, in both the encoder and the decoder: the forget gate, the candidate value, the update gate, the output gate, the cell state, and the hidden state.

Forget gate

\(\mathbf{\Gamma}_f^{\langle t \rangle} = \sigma(\mathbf{W}_f[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_f)\tag{1}\)

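The previous time step's hidden state \(\mathbf{a}^{\langle t-1 \rangle}\) and the current time step's input \(\mathbf{x}^{\langle t \rangle}\) are concatenated, multiplied by the weight matrix \(\mathbf{W}_f\), and added to the bias \(\mathbf{b}_f\). The sigmoid \(\sigma\) then squashes each entry into the range (0, 1): values near 0 mean "forget" the corresponding entry of the previous cell state, and values near 1 mean "keep" it.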
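Equation (1) can be computed directly in a few lines of plain Python. The toy sizes (hidden size 2, input size 1) and the weight values below are hypothetical, chosen only to show the concatenation, the matrix-vector product, and the sigmoid.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forget_gate(W_f, b_f, a_prev, x_t):
    """Gamma_f = sigmoid(W_f [a_prev, x_t] + b_f), one value in (0, 1) per cell unit."""
    concat = a_prev + x_t  # list concatenation gives [a<t-1>, x<t>]
    return [
        sigmoid(sum(w * v for w, v in zip(row, concat)) + b)
        for row, b in zip(W_f, b_f)
    ]


# Hypothetical toy sizes: hidden size 2, input size 1, so W_f is 2 x 3.
W_f = [[0.5, -0.3, 1.0],
       [0.0, 0.2, -2.0]]
b_f = [0.0, 1.0]
gamma_f = forget_gate(W_f, b_f, a_prev=[0.1, 0.4], x_t=[1.0])
print(gamma_f)
```

Here the first unit mostly keeps its old memory and the second mostly forgets it.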

Candidate value

\(\mathbf{\tilde{c}}^{\langle t \rangle} = \tanh\left( \mathbf{W}_{c} [\mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{c} \right) \tag{3}\)

The candidate value is a tensor containing information from the current time step that may be stored in the current cell state \(\mathbf{c}^{\langle t \rangle}\). Because of the \(\tanh\), each of its entries lies between -1 and 1.

The parts of the candidate value that get passed on depend on the update gate.
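Equation (3) has the same structure as the gates but uses \(\tanh\) instead of the sigmoid, so the candidate can push the cell state up or down. The weights below are hypothetical toy values.

```python
import math


def candidate_value(W_c, b_c, a_prev, x_t):
    """c_tilde = tanh(W_c [a_prev, x_t] + b_c), equation (3)."""
    concat = a_prev + x_t  # [a<t-1>, x<t>]
    return [math.tanh(sum(w * v for w, v in zip(row, concat)) + b) for row, b in zip(W_c, b_c)]


# Hypothetical weights (hidden size 2, input size 1).
W_c = [[1.0, 0.0, 2.0],
       [-1.0, 0.5, -3.0]]
b_c = [0.0, 0.0]
c_tilde = candidate_value(W_c, b_c, a_prev=[0.1, 0.4], x_t=[1.0])
print(c_tilde)  # one positive and one negative entry, both inside (-1, 1)
```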

Update gate

\(\mathbf{\Gamma}_i^{\langle t \rangle} = \sigma(\mathbf{W}_i[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_i)\tag{2}\)

The update gate decides which parts of the candidate \(\tilde{\mathbf{c}}^{\langle t \rangle}\) are passed on to the cell state \(\mathbf{c}^{\langle t \rangle}\). Like the forget gate, it uses a sigmoid, so each entry lies between 0 and 1 and acts as a per-element weight on the candidate.
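The filtering effect of the update gate can be shown by computing \(\mathbf{\Gamma}_i^{\langle t \rangle}\) and multiplying it element-wise with a candidate. The weights and the candidate values are hypothetical, chosen so that one unit's gate is nearly open and the other's nearly closed.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def update_gate(W_i, b_i, a_prev, x_t):
    """Gamma_i = sigmoid(W_i [a_prev, x_t] + b_i), equation (2)."""
    concat = a_prev + x_t
    return [sigmoid(sum(w * v for w, v in zip(row, concat)) + b) for row, b in zip(W_i, b_i)]


# Hypothetical weights: unit 0 gets a large positive input, unit 1 a large negative one.
W_i = [[0.0, 0.0, 4.0],
       [0.0, 0.0, -4.0]]
b_i = [0.0, 0.0]
gamma_i = update_gate(W_i, b_i, a_prev=[0.1, 0.4], x_t=[1.0])

c_tilde = [0.8, 0.8]  # hypothetical candidate value
# The Gamma_i * c_tilde term of the cell-state equation (4).
added_to_cell = [g * c for g, c in zip(gamma_i, c_tilde)]
print(added_to_cell)
```

The same candidate value of 0.8 reaches the cell state almost unchanged in unit 0 and is almost entirely blocked in unit 1.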

Output gate

\(\mathbf{\Gamma}_o^{\langle t \rangle}= \sigma(\mathbf{W}_o[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{o})\tag{5}\)

The output gate decides which parts of the cell state are sent out as the output of the time step, that is, the hidden state \(\mathbf{a}^{\langle t \rangle}\).
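Equations (1), (2) and (5) share one form and differ only in their weights and biases, so a single helper can compute any of the three gates. The sketch below uses it for the output gate with hypothetical parameters.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def gate(W, b, a_prev, x_t):
    """Shared form of equations (1), (2) and (5): sigmoid(W [a_prev, x_t] + b)."""
    concat = a_prev + x_t
    return [sigmoid(sum(w * v for w, v in zip(row, concat)) + bk) for row, bk in zip(W, b)]


a_prev, x_t = [0.1, 0.4], [1.0]

# Hypothetical output-gate parameters (hidden size 2, input size 1).
W_o = [[0.2, 0.2, 0.5],
       [1.0, -1.0, 0.0]]
b_o = [0.0, 0.0]
gamma_o = gate(W_o, b_o, a_prev, x_t)
print(gamma_o)
```

Passing `W_f, b_f` or `W_i, b_i` to the same `gate` function would give the forget or update gate.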

Cell state

\(\mathbf{c}^{\langle t \rangle} = \mathbf{\Gamma}_f^{\langle t \rangle}* \mathbf{c}^{\langle t-1 \rangle} + \mathbf{\Gamma}_{i}^{\langle t \rangle} *\mathbf{\tilde{c}}^{\langle t \rangle} \tag{4}\)

The cell state is the "memory" that gets passed on to future time steps.

The new cell state is the sum of two terms: the previous cell state \(\mathbf{c}^{\langle t-1 \rangle}\), weighted element-wise by the forget gate \(\mathbf{\Gamma}_{f}^{\langle t \rangle}\), and the candidate value \(\tilde{\mathbf{c}}^{\langle t \rangle}\), weighted element-wise by the update gate \(\mathbf{\Gamma}_{i}^{\langle t \rangle}\). Here \(*\) denotes element-wise multiplication.
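A small worked example of equation (4). Real gate values lie strictly between 0 and 1; the extreme values 1.0 and 0.0 are used here only so the effect is easy to read: unit 0 keeps its old memory and ignores the candidate, while unit 1 discards its old memory and takes the candidate.

```python
def cell_state(gamma_f, c_prev, gamma_i, c_tilde):
    """c<t> = Gamma_f * c<t-1> + Gamma_i * c_tilde, element-wise (equation 4)."""
    return [f * cp + i * ct for f, cp, i, ct in zip(gamma_f, c_prev, gamma_i, c_tilde)]


c = cell_state(gamma_f=[1.0, 0.0], c_prev=[0.5, 0.5],
               gamma_i=[0.0, 1.0], c_tilde=[-0.9, -0.9])
print(c)  # [0.5, -0.9]
```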

Hidden state

\(\mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_o^{\langle t \rangle} * \tanh(\mathbf{c}^{\langle t \rangle})\tag{6}\)

The hidden state gets passed to the LSTM cell's next time step.

The hidden state \(\mathbf{a}^{\langle t \rangle}\) is obtained by passing the cell state \(\mathbf{c}^{\langle t \rangle}\) through \(\tanh\) and multiplying the result element-wise by the output gate \(\mathbf{\Gamma}_{o}^{\langle t \rangle}\).
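Equation (6) in plain Python, with hypothetical inputs. As with the cell-state example, the extreme gate values 1.0 and 0.0 are chosen only for readability: the first unit exposes \(\tanh\) of its cell state, and the second hides its cell state completely even though that memory is still carried forward in \(\mathbf{c}^{\langle t \rangle}\).

```python
import math


def hidden_state(gamma_o, c_t):
    """a<t> = Gamma_o * tanh(c<t>), element-wise (equation 6)."""
    return [o * math.tanh(c) for o, c in zip(gamma_o, c_t)]


a_t = hidden_state(gamma_o=[1.0, 0.0], c_t=[0.5, -0.9])
print(a_t)
```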

Seq2Seq Decoder part

The decoder starts from the context vector that the encoder produced for the phrase "I am." The context vector initializes both the long-term and short-term memories of the decoder's Long Short-Term Memory (LSTM) network.

For the first decoding step, the input to the LSTM is the embedding of the end-of-sequence token, <EOS>, which here doubles as the start signal. This embedding comes from an embedding layer trained on the output (Spanish) vocabulary.

The LSTM's short-term memory (hidden state) is fed into a fully connected (dense) layer with one output per word in the output vocabulary. A softmax then turns these outputs into a probability distribution over the vocabulary, and the word with the highest probability is chosen as the decoder's first output. In this example, the generated word is "soy."
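The dense layer, softmax, and word choice can be sketched in plain Python. The output vocabulary, the decoder hidden state, and the weights below are hypothetical, picked so that "soy" comes out on top; picking the single most probable word is greedy selection.

```python
import math

# Hypothetical toy output vocabulary.
output_vocab = ["<EOS>", "soy", "eres", "es"]


def dense(W, b, a):
    """Fully connected layer: one score (logit) per vocabulary word."""
    return [sum(w * v for w, v in zip(row, a)) + bk for row, bk in zip(W, b)]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


a_t = [0.46, -0.2]  # hypothetical decoder hidden state
W = [[0.0, 0.0],    # <EOS>
     [2.0, -1.0],   # soy
     [0.1, 0.0],    # eres
     [-0.5, 0.5]]   # es
b = [0.0] * len(output_vocab)

probs = softmax(dense(W, b, a_t))
word = output_vocab[probs.index(max(probs))]  # greedy choice: most probable word
print(word)  # soy
```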

Decoding does not stop after "soy." The decoder keeps unrolling: the word it just generated is passed through the output embedding layer and used as the input for the next LSTM step. The same calculations update the long-term and short-term memories, and the new short-term memory again goes through the dense layer and softmax. This loop repeats until the decoder outputs the end-of-sequence token, <EOS>, which signals that generation is complete.
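The decoding loop can be sketched as follows. To keep it short, the embedding, LSTM, dense layer, and softmax are replaced by a hypothetical stand-in, `toy_decoder_step`, that returns fixed probabilities for each previous word; the `MAX_LEN` cap is an added assumption so the loop also stops if a model never emits <EOS>.

```python
EOS = "<EOS>"
MAX_LEN = 10  # assumed safety limit on output length

# Hypothetical stand-in for "embedding -> LSTM -> dense -> softmax":
# fixed next-word probabilities keyed by the previous word.
TOY_TRANSITIONS = {
    EOS: {"soy": 0.9, "eres": 0.05, EOS: 0.05},
    "soy": {EOS: 0.8, "soy": 0.1, "eres": 0.1},
}


def toy_decoder_step(prev_word, state):
    """Return (probabilities, new_state); a real LSTM step would update the state."""
    return TOY_TRANSITIONS[prev_word], state


def greedy_decode(context, step=toy_decoder_step):
    state = context  # decoder memories start from the encoder's context
    prev = EOS       # the first input is the <EOS> token
    output = []
    for _ in range(MAX_LEN):
        probs, state = step(prev, state)
        word = max(probs, key=probs.get)  # pick the most probable word
        if word == EOS:
            break
        output.append(word)
        prev = word  # feed the generated word back in as the next input
    return output


print(greedy_decode(context=None))  # ['soy']
```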