Implementing NLP using Word Embeddings from Pre-trained GloVe

Natural Language Processing (NLP) is a fascinating field of artificial intelligence that focuses on the interaction between computers and human language. It involves the development of algorithms and models that enable machines to understand, interpret, and generate human language in a way that is both meaningful and useful. NLP combines linguistics, computer science, and machine learning to facilitate tasks such as speech recognition, sentiment analysis, language translation, and chatbot functionality. This technology underpins various applications, from virtual assistants to automated customer service systems, and continues to evolve as it tackles complex challenges in understanding context, nuances, and the subtleties of human communication.

Deep learning models are designed to work with numeric tensors, meaning they cannot directly process text data. To enable these models to understand and learn from textual information, we must convert the text into a numerical format. This process is known as vectorization.

Vectorizing text means transforming words, sentences, or entire documents into numerical representations. Common techniques include one-hot encoding, TF-IDF, and word embeddings, each of which maps textual data to numerical vectors. This lets deep learning models analyze the text and extract patterns from it, so they can perform tasks such as classification, sentiment analysis, or language translation.

Steps to Process Text Data:

Standardize the Text:

- Convert all characters in the text to lowercase so that, for example, "Movie" and "movie" are treated as the same word.
- Remove punctuation marks such as commas, periods, and exclamation points, so that processing focuses on the words themselves.

Tokenization:

- Split the standardized text into smaller units known as tokens. This typically means separating the text into individual words or short phrases, which makes the text easier to analyze and manipulate.

Vectorization:

- Convert each token into a numeric vector. Representing tokens as numerical arrays makes it possible to perform calculations on them and to apply machine learning algorithms. Common methods for vectorization include one-hot encoding, term frequency-inverse document frequency (TF-IDF), and word embeddings.
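The three steps above can be sketched in plain Python. This is a minimal illustration, not what Keras does internally. The helper names `standardize`, `tokenize`, `build_vocab`, and `vectorize` are hypothetical. The vocabulary reserves index 0 for padding and index 1 for unknown words, which mirrors the convention Keras uses later in this post.

```python
import string
from collections import Counter

def standardize(text: str) -> str:
    """Lowercase the text and strip ASCII punctuation."""
    return text.lower().translate(str.maketrans("", "", string.punctuation))

def tokenize(text: str) -> list:
    """Split standardized text on whitespace."""
    return text.split()

def build_vocab(docs: list) -> dict:
    """Map tokens to integers, most frequent first.
    Index 0 is reserved for padding and 1 for unknown tokens."""
    counts = Counter(tok for doc in docs for tok in tokenize(standardize(doc)))
    vocab = {"": 0, "[UNK]": 1}
    for tok, _ in counts.most_common():
        vocab[tok] = len(vocab)
    return vocab

def vectorize(text: str, vocab: dict) -> list:
    """Convert a text into integer token ids (1 for unknown words)."""
    return [vocab.get(tok, 1) for tok in tokenize(standardize(text))]

docs = ["I loved this movie!", "This movie was terrible."]
vocab = build_vocab(docs)
print(vectorize("This movie rocks!", vocab))  # [2, 3, 1]
```

Here "rocks" never appears in `docs`, so it maps to the unknown index 1.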

By following the steps above, we can effectively prepare text data for further analysis or processing.

Let's start by downloading the IMDB movie reviews dataset:

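```
!curl -O https://ai.stanford.edu/~amaas/data/sentiment/aclImdb_v1.tar.gz
!tar -xf aclImdb_v1.tar.gz
!rm -r aclImdb/train/unsup
```

The `unsup` directory contains unlabeled reviews. We don't need them for supervised training, so we delete it.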

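Next, we set aside 20% of the training reviews as a validation set by moving them into a new `aclImdb/val` directory. Then we load all three splits with `text_dataset_from_directory`: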

```python
import os, pathlib, shutil, random
from tensorflow import keras

batch_size = 32
base_dir = pathlib.Path("aclImdb")
val_dir = base_dir / "val"
train_dir = base_dir / "train"

# Move a reproducible 20% of each class from train/ to val/.
# Run this cell only once: os.makedirs raises an error if val/ already exists.
for category in ("neg", "pos"):
    os.makedirs(val_dir / category)
    files = os.listdir(train_dir / category)
    random.Random(1337).shuffle(files)  # fixed seed for a reproducible split
    num_val_samples = int(0.2 * len(files))
    val_files = files[-num_val_samples:]
    for fname in val_files:
        shutil.move(train_dir / category / fname,
                    val_dir / category / fname)

# Subdirectory names (neg, pos) become the labels 0 and 1.
train_ds = keras.utils.text_dataset_from_directory(
    "aclImdb/train", batch_size=batch_size
)
val_ds = keras.utils.text_dataset_from_directory(
    "aclImdb/val", batch_size=batch_size
)
test_ds = keras.utils.text_dataset_from_directory(
    "aclImdb/test", batch_size=batch_size
)
```
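The split loop above shuffles each class's file list with a fixed seed (1337) and moves the last 20% into `aclImdb/val`. We can isolate the same logic as a pure function and check the arithmetic without touching the file system. `split_files` is a hypothetical helper. The 12,500 generated file names stand in for one IMDB training class, which holds 12,500 reviews.

```python
import random

def split_files(files, val_fraction=0.2, seed=1337):
    """Return (train_files, val_files) with the same logic as the loop above."""
    files = list(files)  # copy so the caller's list is not shuffled in place
    random.Random(seed).shuffle(files)
    num_val_samples = int(val_fraction * len(files))
    cut = len(files) - num_val_samples  # safe even when num_val_samples == 0
    return files[:cut], files[cut:]

names = [f"{i}.txt" for i in range(12500)]
train_files, val_files = split_files(names)
print(len(train_files), len(val_files))  # 10000 2500
```

Applied to both classes, this leaves 20,000 reviews for training and 5,000 for validation.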

Let's print one of the reviews from the loaded training dataset:

```python
# Take one batch and print its first review and label.
for text_batch, label_batch in train_ds.take(1):
    for i in range(1):
        print("Review", text_batch.numpy()[i])
        print("Label", label_batch.numpy()[i])
```

```
Review b"God I love this movie. If you grew up in the 80's and love Heavy Metal, this is the Movie for you. They really don't get much better than this. The Fastway soundtrack is one of the best soundtracks ever. I put on the record when it first came out and spent the next month learning every song on guitar note for note. The plot outline is your standard Heavy Metal horror movie. Kid's favorite singer dies. Kid plays record backwards. Hero comes back in demonic form and rocks the town. What more could you ask for?<br /><br />If you haven't seen it yet, rush out and buy it. You will not be disappointed. Metal Rules..."
Label 1
```

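Label `1` corresponds to the `pos` class (a positive review). To build the vocabulary we only need the raw text, so we create a version of the training dataset without the labels:

```python
# Keep only the review text (x) and drop the label (y).
text_only_train_ds = train_ds.map(lambda x, y: x)
```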

We will use the Keras `TextVectorization` layer. It is a preprocessing layer that maps raw strings to sequences of integer token indices. With `output_mode="int"`, each word is replaced by its index in a learned vocabulary. The layer's parameters let us tailor the output to our application:

- `max_tokens` caps the vocabulary size. Here it is 20,000, which includes the padding and out-of-vocabulary entries.
- `output_sequence_length` pads or truncates every review to a fixed length. Here it is 600 tokens, so all sequences in a batch have the same shape.

```python
from tensorflow.keras import layers

max_length = 600    # pad/truncate every review to 600 tokens
max_tokens = 20000  # vocabulary size, including padding and [UNK]

text_vectorization = layers.TextVectorization(
    max_tokens=max_tokens,
    output_mode="int",
    output_sequence_length=max_length,
)
# Build the vocabulary from the training text only.
text_vectorization.adapt(text_only_train_ds)

# Apply the layer to every split, keeping the labels unchanged.
int_train_ds = train_ds.map(
    lambda x, y: (text_vectorization(x), y),
    num_parallel_calls=4)
int_val_ds = val_ds.map(
    lambda x, y: (text_vectorization(x), y),
    num_parallel_calls=4)
int_test_ds = test_ds.map(
    lambda x, y: (text_vectorization(x), y),
    num_parallel_calls=4)
```

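The `adapt()` method scans the training text and builds (indexes) the vocabulary. Index 0 is reserved for padding, index 1 for out-of-vocabulary words (`[UNK]`), and the remaining indices go to the most frequent tokens.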
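Conceptually, `adapt()` keeps the `max_tokens` most frequent entries, and this count includes the two reserved entries. Calling the layer then maps each token to its index and pads or truncates the result to `output_sequence_length`. The sketch below approximates that behaviour in plain Python with tiny sizes. It is a simplified model (lowercasing and whitespace splitting only, keeping the first tokens and padding at the end), not the Keras implementation. `adapt_vocab` and `encode` are hypothetical names.

```python
from collections import Counter

def adapt_vocab(texts, max_tokens):
    """Vocabulary list: padding, OOV token, then the most frequent words.
    max_tokens counts the two reserved entries."""
    counts = Counter(word for text in texts for word in text.lower().split())
    most_common = [word for word, _ in counts.most_common(max_tokens - 2)]
    return ["", "[UNK]"] + most_common

def encode(text, vocabulary, max_length):
    """Map words to indices (1 if unknown), then truncate/pad with 0s."""
    index = {word: i for i, word in enumerate(vocabulary)}
    ids = [index.get(word, 1) for word in text.lower().split()]
    ids = ids[:max_length]
    return ids + [0] * (max_length - len(ids))

vocabulary = adapt_vocab(["the movie was great", "the plot was thin"], max_tokens=5)
print(vocabulary)  # ['', '[UNK]', 'the', 'was', 'movie']
print(encode("the acting was great", vocabulary, max_length=6))  # [2, 1, 3, 1, 0, 0]
```

"great" maps to `[UNK]` here because the tiny `max_tokens=5` budget left room for only three real words.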

Pre-trained Embedding Matrix

A pre-trained embedding matrix is a table of vectors for the words (tokens) of a language or domain, learned in advance from a large corpus. Each row corresponds to a unique word. The values in that row capture semantic information, so words with similar meanings have similar vectors. Plugging such a matrix into a model lets it reuse these learned linguistic features instead of learning word representations from scratch.
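The similarity between two word vectors is usually measured with cosine similarity. The sketch below uses made-up 3-dimensional vectors (not real GloVe values) purely to show the computation.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    a = np.asarray(a, dtype="float32")
    b = np.asarray(b, dtype="float32")
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Toy vectors, invented for illustration only.
toy_vectors = {
    "good":  [0.9, 0.1, 0.0],
    "great": [0.8, 0.2, 0.1],
    "awful": [-0.7, 0.1, 0.2],
}
print(cosine_similarity(toy_vectors["good"], toy_vectors["great"]))  # close to 1
print(cosine_similarity(toy_vectors["good"], toy_vectors["awful"]))  # negative
```

Once `embeddings_index` is loaded, the same function can compare real 100-dimensional vectors, for example `cosine_similarity(embeddings_index["good"], embeddings_index["great"])`.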

Let's first download the pre-trained GloVe (Global Vectors for Word Representation) vectors, unzip the archive, and load the vectors in our code.

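```
!curl -O http://nlp.stanford.edu/data/glove.6B.zip
!unzip -q glove.6B.zip -d data
```

The archive contains 50-, 100-, 200-, and 300-dimensional versions of the vectors. We unzip it into `data/` and use the 100-dimensional file, `glove.6B.100d.txt`.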

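Next, we parse the file into a dictionary, `embeddings_index`, that maps each word to its vector. Each line of the file holds a word followed by its 100 space-separated coefficients: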

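```python
import numpy as np

path_to_glove_file = "data/glove.6B.100d.txt"

embeddings_index = {}
# The file contains non-ASCII words, so read it explicitly as UTF-8.
with open(path_to_glove_file, encoding="utf-8") as f:
    for line in f:
        # Each line: the word, then its coefficients separated by spaces.
        word, coefs = line.split(maxsplit=1)
        coefs = np.fromstring(coefs, "f", sep=" ")
        embeddings_index[word] = coefs

print(f"Found {len(embeddings_index)} word vectors.")
```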

```
Found 400000 word vectors.
```

The GloVe 6B 100d file provides 100-dimensional vectors for a vocabulary of 400,000 words. The vectors were trained on about 6 billion tokens of text (Wikipedia 2014 plus Gigaword 5). Each word is a point in this 100-dimensional space, and words used in similar contexts end up close together. This lets models measure similarities and differences between words in tasks such as text analysis and sentiment detection.

Printing the first entry of the dictionary gives:

```python
# Print the first (word, vector) pair in the dictionary.
for key, value in embeddings_index.items():
    print(f"{key}: {value}")
    break
```

```
the: [-0.038194 -0.24487 0.72812 -0.39961 0.083172 0.043953 -0.39141
 0.3344 -0.57545 0.087459 0.28787 -0.06731 0.30906 -0.26384
 -0.13231 -0.20757 0.33395 -0.33848 -0.31743 -0.48336 0.1464
 -0.37304 0.34577 0.052041 0.44946 -0.46971 0.02628 -0.54155
 -0.15518 -0.14107 -0.039722 0.28277 0.14393 0.23464 -0.31021
 0.086173 0.20397 0.52624 0.17164 -0.082378 -0.71787 -0.41531
 0.20335 -0.12763 0.41367 0.55187 0.57908 -0.33477 -0.36559
 -0.54857 -0.062892 0.26584 0.30205 0.99775 -0.80481 -3.0243
 0.01254 -0.36942 2.2167 0.72201 -0.24978 0.92136 0.034514
 0.46745 1.1079 -0.19358 -0.074575 0.23353 -0.052062 -0.22044
 0.057162 -0.15806 -0.30798 -0.41625 0.37972 0.15006 -0.53212
 -0.2055 -1.2526 0.071624 0.70565 0.49744 -0.42063 0.26148
 -1.538 -0.30223 -0.073438 -0.28312 0.37104 -0.25217 0.016215
 -0.017099 -0.38984 0.87424 -0.72569 -0.51058 -0.52028 -0.1459
 0.8278 0.27062 ]
```

The word "the" is represented by this 100-dimensional vector of floating-point values.

Next, we build `word_index`, a dictionary that maps each token in the `TextVectorization` vocabulary to its integer index:

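```python
embedding_dim = 100

# get_vocabulary() returns the tokens ordered by their index.
vocabulary = text_vectorization.get_vocabulary()
word_index = dict(zip(vocabulary, range(len(vocabulary))))

# Print the first (token, index) pair.
for key, value in word_index.items():
    print(f"{key}: {value}")
    break
```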

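```
: 0
```

The first entry is the empty string, which is the padding token at index 0. Index 1 holds the out-of-vocabulary token `[UNK]`.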

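We now initialize an embedding matrix of shape `(max_tokens, embedding_dim)`, that is, (20000, 100). Row `i` is filled with the GloVe vector of the word at index `i`. Words with no GloVe vector, including the padding and `[UNK]` entries, keep an all-zero row.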

```python
embedding_matrix = np.zeros((max_tokens, embedding_dim))
for word, i in word_index.items():
    if i < max_tokens:
        embedding_vector = embeddings_index.get(word)
        # Words missing from GloVe keep an all-zero row.
        if embedding_vector is not None:
            embedding_matrix[i] = embedding_vector
```
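To see what the loop above produces, here is the same logic on a toy vocabulary with invented 3-dimensional vectors. `toy_index` stands in for `embeddings_index`, and `toy_vocab` stands in for the output of `get_vocabulary()`. Both are hypothetical. Counting filled rows versus missing rows is a quick way to check how much of the vocabulary the pre-trained vectors cover.

```python
import numpy as np

toy_vocab = ["", "[UNK]", "the", "movie", "zzzunseen"]
toy_index = {  # invented stand-ins for GloVe vectors
    "the": np.array([0.1, 0.2, 0.3], dtype="float32"),
    "movie": np.array([0.4, 0.5, 0.6], dtype="float32"),
}
max_tokens, embedding_dim = len(toy_vocab), 3

word_index = dict(zip(toy_vocab, range(len(toy_vocab))))
embedding_matrix = np.zeros((max_tokens, embedding_dim))
hits, misses = 0, 0
for word, i in word_index.items():
    if i < max_tokens:
        vector = toy_index.get(word)
        if vector is not None:
            embedding_matrix[i] = vector
            hits += 1
        else:
            misses += 1  # this row stays all zeros
print(f"Converted {hits} words ({misses} misses)")  # Converted 2 words (3 misses)
print(embedding_matrix)
```

On the real data, the same two counters show how many of the 20,000 vocabulary entries were found in GloVe.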

Now we create a Keras `Embedding` layer that uses this matrix. Instead of starting from random weights, the layer is initialized with `embedding_matrix` through `keras.initializers.Constant`, so each input index maps to its pre-trained GloVe vector. Setting `trainable=False` freezes these weights, so the pre-trained vectors are not updated during training. Setting `mask_zero=True` tells downstream layers to ignore the padding index 0.

```python
embedding_layer = layers.Embedding(
    max_tokens,
    embedding_dim,
    embeddings_initializer=keras.initializers.Constant(embedding_matrix),
    trainable=False,   # keep the GloVe vectors fixed
    mask_zero=True,    # mask padding (index 0) for downstream layers
)
```
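A frozen `Embedding` layer initialized this way is essentially a table lookup: token id `i` selects row `i` of the matrix. With `mask_zero=True`, Keras also computes a boolean mask that is False wherever the id is 0, and layers such as the LSTM use it to skip padded positions. The NumPy sketch below mimics both operations on a toy matrix. It illustrates the idea and is not the layer's actual code.

```python
import numpy as np

embedding_matrix = np.arange(12, dtype="float32").reshape(4, 3)  # toy 4-word table
token_ids = np.array([[2, 3, 1, 0, 0]])  # one sequence padded with two 0s

embedded = embedding_matrix[token_ids]  # row lookup per token
mask = token_ids != 0                   # True for real tokens, False for padding

print(embedded.shape)  # (1, 5, 3)
print(mask)
```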

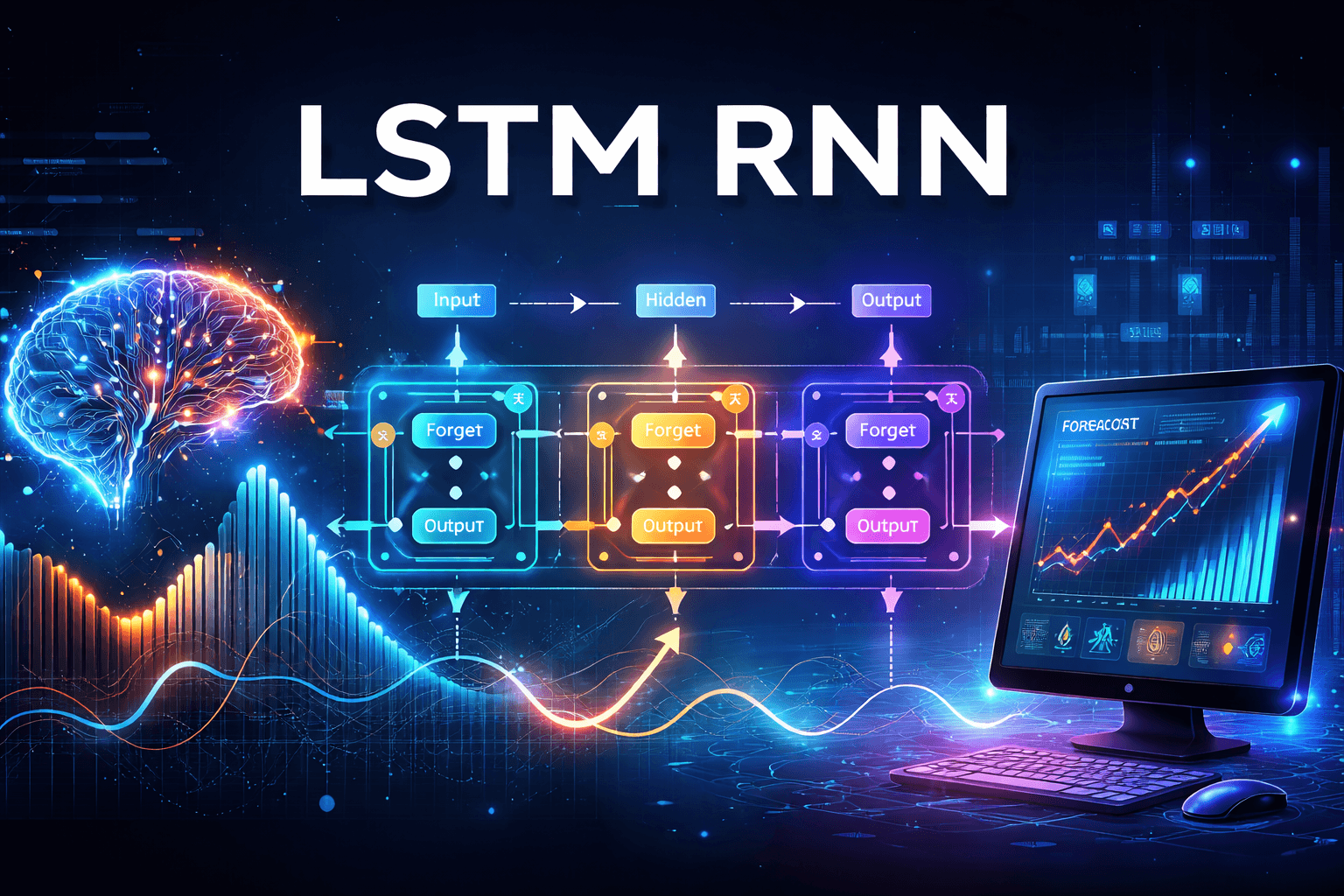

Next, let's build a bidirectional Long Short-Term Memory (LSTM) recurrent neural network (RNN). The `Bidirectional` wrapper runs one LSTM over the sequence from start to end and a second one from end to start. Each review is therefore summarized using both earlier and later context. We add dropout for regularization to reduce overfitting. The model ends with a single `Dense` unit with a sigmoid activation, which outputs the probability that a review is positive.
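The idea behind `layers.Bidirectional` can be shown with a toy recurrent cell. One copy reads the sequence left to right, a second copy reads it right to left, and their final states are concatenated. Keras's default `merge_mode` is `"concat"`, so `Bidirectional(LSTM(32))` outputs 64 features. To keep the sketch short, it uses a plain tanh RNN with random weights instead of an LSTM. The function names and sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_rnn(input_dim, units):
    """Random weights for a simple tanh RNN (an LSTM stand-in for illustration)."""
    return (rng.normal(scale=0.1, size=(input_dim, units)),
            rng.normal(scale=0.1, size=(units, units)))

def run_rnn(weights, sequence):
    """Return the final hidden state after reading `sequence` in order."""
    w_x, w_h = weights
    h = np.zeros(w_h.shape[0])
    for x_t in sequence:
        h = np.tanh(x_t @ w_x + h @ w_h)
    return h

def bidirectional(forward, backward, sequence):
    """Concatenate the final states of a forward and a backward pass."""
    h_fwd = run_rnn(forward, sequence)
    h_bwd = run_rnn(backward, sequence[::-1])
    return np.concatenate([h_fwd, h_bwd])

sequence = rng.normal(size=(7, 100))  # 7 timesteps of 100-d embeddings
forward, backward = make_rnn(100, 32), make_rnn(100, 32)
features = bidirectional(forward, backward, sequence)
print(features.shape)  # (64,)
```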

```python
# Input: variable-length sequences of token indices.
inputs = keras.Input(shape=(None,), dtype="int64")
embedded = embedding_layer(inputs)
x = layers.Bidirectional(layers.LSTM(32))(embedded)
x = layers.Dropout(0.5)(x)
outputs = layers.Dense(1, activation="sigmoid")(x)
model = keras.Model(inputs, outputs)

model.compile(optimizer="rmsprop",
              loss="binary_crossentropy",
              metrics=["accuracy"])
model.summary()

# Save only the model with the best validation loss.
callbacks = [
    keras.callbacks.ModelCheckpoint("glove_embeddings_sequence_model.keras",
                                    save_best_only=True)
]
model.fit(int_train_ds, validation_data=int_val_ds, epochs=10, callbacks=callbacks)

# Reload the best checkpoint and evaluate it on the test set.
model = keras.models.load_model("glove_embeddings_sequence_model.keras")
print(f"Test acc: {model.evaluate(int_test_ds)[1]:.3f}")
```

We get a test accuracy of about 87%.